Review: The Muppet Christmas Carol

While not the Muppets’ strongest effort, this oddly faithful retelling of the Dickens tale is a satisfying experience in 4K on Disney+

by Dennis Burger

updated December 3, 2023

The Muppet Christmas Carol isn’t exactly the creative apex of the Muppets franchise. As the first film in the series to be made after the death of Jim Henson, it lacks a lot of the creator’s bohemian funkiness and marks the beginning of a transition for the Muppets in which they became a little more kid-friendly and a little less clever. (Although, to be fair, you could just as easily level some of the same criticism at The Great Muppet Caper.)

But—and this is a pretty huge “but”—it’s still my all-time favorite interpretation of Charles Dickens’ literary classic, just nudging out Richard Donner’s Scrooged and the excellent made-for-TV version from 1984 starring George C. Scott. A lot of that can be attributed to Michael Caine’s performance as Scrooge, in which he seems completely oblivious to the fact that his co-stars almost all have hands up their butts. Instead, he plays the role straight, leaving the winking and nodding mostly to Gonzo the Great, who plays the role of Dickens himself.

There’s also the lovely soundtrack, with songs written by Paul Williams, who didn’t quite turn in as many memorable ditties as he did for The Muppet Movie or Emmet Otter’s Jug-Band Christmas, but still gives the movie an extra heaping helping of charm.

Oddly enough, despite the songs and despite the puppetry, The Muppet Christmas Carol is a shockingly faithful adaptation of Dickens’ book, abridged though it may be. And as such, it’s a must-see for me every Christmas season.

But as with It’s a Wonderful Life, one must ask if this movie is actually worth owning. And for now, and only for now, I say probably not. That’s primarily because it’s available at no extra charge to Disney+ subscribers, in Dolby Vision no less. The service was, as best I can tell, the first to offer The Muppet Christmas Carol in 4K, and although other digital providers have caught up, I can’t imagine it looking any better on any of those services than it does on Disney+.

The 4K resolution does very little to add detail or definition to the cinematography, and unless my eyes deceive me, the current 4K master wasn’t sourced from the original camera negative. It frankly looks like an upscale from an HD master taken from a print (or at best an interpositive), with the only noteworthy resolution differences coming in the form of enhanced (but very inconsistent) film grain.

The HDR does add a lot to the presentation, mostly by toning down the over-saturation seen in the HD version, leaving the most vibrant hues for those spots with pure primary colors, like the inside of Kermit’s mouth. The HDR also brings more consistency and subtlety to contrasts, making blacks a good bit more consistent and eliminating some crush.

So this is definitely the best The Muppet Christmas Carol has ever looked. But hang on. In recent weeks, it was revealed that the original camera negative for the deleted musical number “When Love Is Gone” has been discovered and will be included in a new ground-up 4K restoration of the film sourced from the original elements.

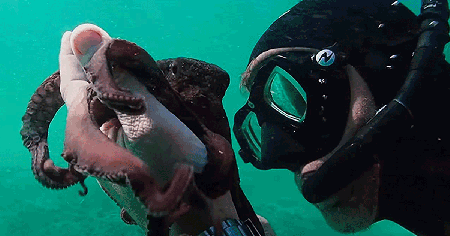

If you’re not familiar with “When Love Is Gone,” that’s probably because the song was cut from the theatrical version of the film at the insistence of Jeffrey Katzenberg and Disney, for fear that it was too emotionally sophisticated for a children’s film (something I can’t imagine Jim Henson ever allowing, but it was his son Brian’s cinematic directorial debut). The song was integrated into LaserDisc and VHS releases of the film, as well as some DVD versions, but has disappeared from higher-quality releases, presumably because of concerns about the quality of the surviving footage.

Whether you’re particularly interested in that song or not (for my money, it’s one of the film’s best, and thankfully it’s included as a deleted scene on Disney+ and elsewhere), the news that The Muppet Christmas Carol is getting a proper restoration is enough to warrant holding off on a purchase for now.

But if you’ve got Disney+, you should still add the movie to your holiday viewing rotation this year. For all its flaws, it’s still an incredibly charming children’s classic with tons of genuinely funny moments and some wonderful performances throughout, from humans and Muppets alike. And for what it’s worth, it’s the only cinematic adaptation of A Christmas Carol that has genuinely made me shed a tear over the death of Tiny Tim.

Dennis Burger is an avid Star Wars scholar, Tolkien fanatic, and Corvette enthusiast who somehow also manages to find time for technological passions including high-end audio, home automation, and video gaming. He lives in the armpit of Alabama with his wife Bethany and their four-legged child Bruno, a 75-pound American Staffordshire Terrier who thinks he’s a Pomeranian.

PICTURE | HDR adds a lot to the presentation, mostly by toning down the over-saturation seen in the HD version, while also bringing more consistency and subtlety to contrasts

© 2023 Cineluxe LLC